In the evolving landscape of decentralized applications, fully homomorphic encryption (FHE) toolkits are unlocking new possibilities for small, skills-augmented AI models in private onchain compute. These compact models, enhanced with specialized capabilities, promise to deliver robust performance without the resource demands of frontier giants. Recent research underscores their potential to rival larger systems in targeted domains, making them ideal candidates for blockchain environments where data privacy is paramount.

Consider the computational barriers that have long plagued FHE: its intensive overhead rendered it impractical for complex AI workloads. Yet, as hardware accelerators and optimized frameworks emerge, small models - precisely tuned with domain-specific skills - sidestep these limitations. They enable private onchain AI inference, where encrypted data flows through smart contracts without ever exposing plaintext. This shift not only preserves confidentiality but also democratizes access to sophisticated analytics in Web3 ecosystems.

Skills-Augmented Models Redefine Efficiency in Blockchain AI

SkillsBench findings from early 2026 reveal a compelling truth: small language models (SLMs), when augmented with curated skills, match or exceed the output of much larger counterparts in specialized tasks. In healthcare diagnostics or financial risk assessment, these models leverage focused expertise - think pattern recognition honed for encrypted tabular data - rather than brute-force scaling. This approach suits FHE toolkits well: a smaller model means shallower encrypted circuits and therefore fewer bootstrapping cycles, a notorious FHE bottleneck.

Blockchain innovators stand to gain immensely. Traditional AI deployment onchain exposes user data to validators and adversaries; skills-augmented SLMs, processed via FHE, keep that data encrypted throughout computation. Ethereum's ecosystem, with its maturing L2s, now supports such integrations, fostering encrypted AI dApps that operate directly on user-submitted ciphertexts.

FHE Frameworks Tailored for Encrypted Model Training

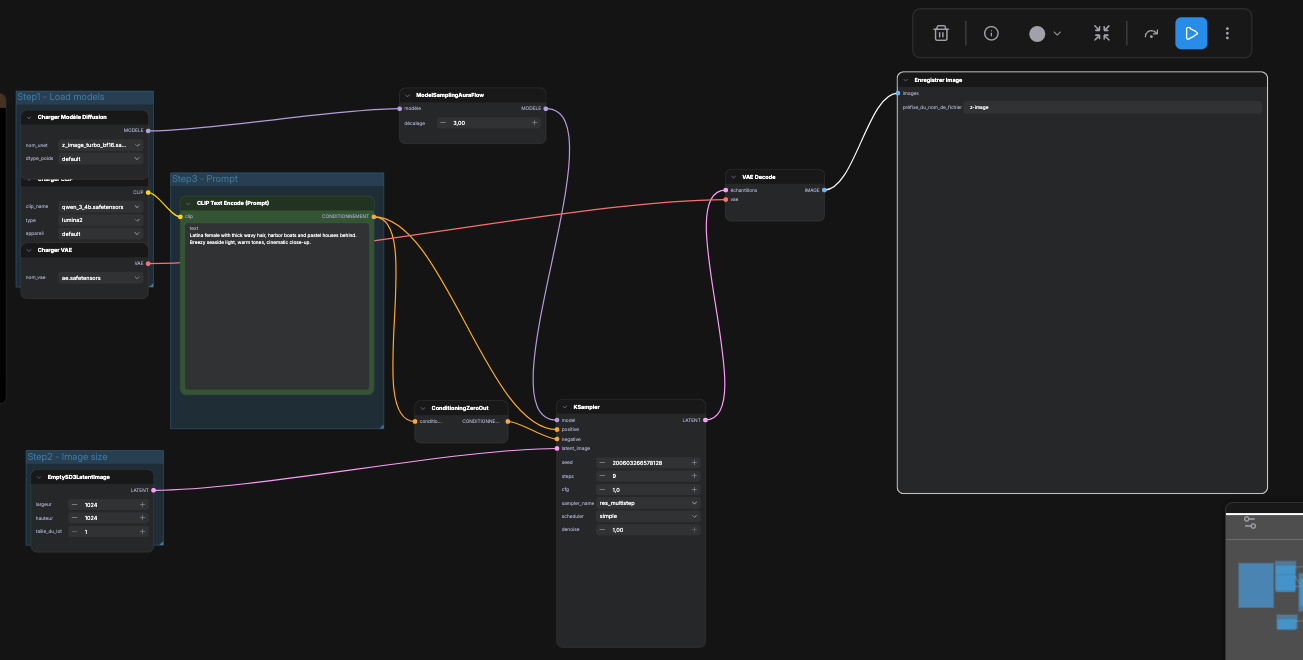

The FHAIM framework, launched in February 2026, marks a pivotal advancement. It trains marginal-based synthetic data generators directly on encrypted tabular datasets and releases outputs with differential privacy. Reported results show performance comparable to unencrypted baselines with feasible runtimes, which opens the door to fine-tuning small models without decryption. Developers can now iterate on skills-augmented models for blockchain applications, confident in data sovereignty.
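For readers new to marginal-based generators, here is a minimal plaintext sketch of the two building blocks named above: counting a marginal over a table and adding Gaussian noise to it. This is not FHAIM's code, and FHAIM runs the analogous steps under encryption; the function names (`marginal`, `noisyMarginal`), the row format, and the choice of the Gaussian mechanism are assumptions made for illustration.

```typescript
// Plaintext illustration only. Names and data layout are hypothetical.
type Row = Record<string, number>; // each attribute holds a category index

// Count how often each combination of values for `attrs` occurs.
// `domain` maps an attribute name to its number of categories.
function marginal(rows: Row[], attrs: string[], domain: Record<string, number>): number[] {
  const size = attrs.reduce((acc, a) => acc * domain[a], 1);
  const counts = new Array<number>(size).fill(0);
  for (const row of rows) {
    let idx = 0;
    for (const a of attrs) idx = idx * domain[a] + row[a]; // mixed-radix cell index
    counts[idx] += 1;
  }
  return counts;
}

// Standard normal sample via Box-Muller. `rand` is injectable for testing.
// Math.random is NOT suitable for real differential privacy deployments.
function gaussian(rand: () => number = Math.random): number {
  const u = 1 - rand(); // shift to (0, 1] so Math.log never sees 0
  const v = rand();
  return Math.sqrt(-2 * Math.log(u)) * Math.cos(2 * Math.PI * v);
}

// Gaussian mechanism: add N(0, sigma^2) noise to every cell of the marginal.
function noisyMarginal(counts: number[], sigma: number, rand: () => number = Math.random): number[] {
  return counts.map((c) => c + sigma * gaussian(rand));
}
```

Calling `marginal(rows, ["age", "income"], domain)` followed by `noisyMarginal(counts, sigma)` gives the kind of noisy statistic a synthetic data generator is fitted to. Choosing `sigma` for a given privacy budget is outside the scope of this sketch.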

Key FHAIM Benefits

- Encrypted Training: FHAIM enables training of marginal-based synthetic data generators on encrypted tabular data without decryption throughout the process. [arXiv]

- Differential Privacy Outputs: Releases synthetic data outputs with built-in differential privacy guarantees to protect individual privacy.

- Feasible Runtimes for Small Models: Achieves performance comparable to traditional methods with practical runtimes suitable for small, skills-augmented AI models.

- Ethereum Smart Contract Compatibility: Designed for private onchain compute, integrating with FHEVM-like environments for Ethereum blockchain deployment.

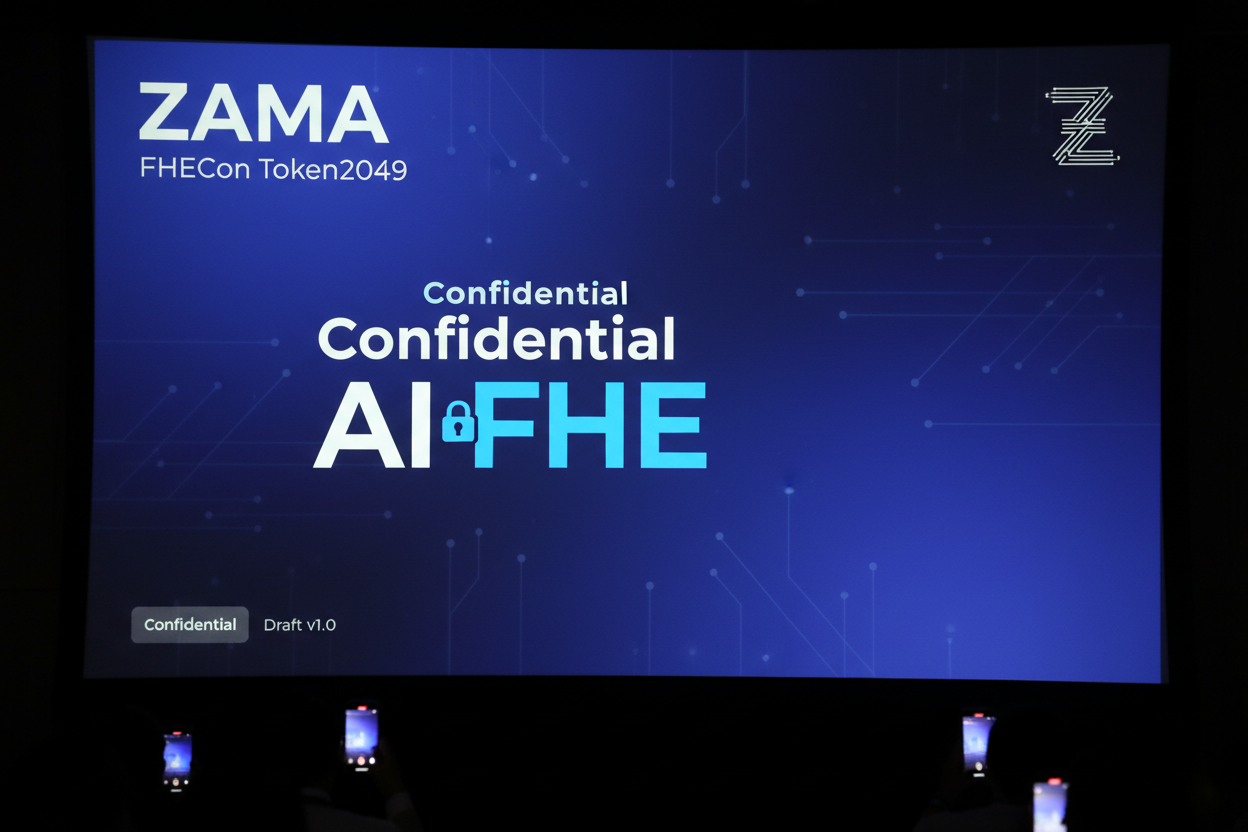

Complementing this, confidential AI fine-tuning initiatives allow organizations to enhance models using partner data streams, all under FHE's veil. No sensitive information leaves its encrypted state, addressing regulatory hurdles in finance and beyond. Zama's contributions, including their FHEVM toolkit, further streamline FHE integration on Ethereum, providing TypeScript libraries for onchain deployment.

Hardware Breakthroughs Accelerate Real-Time Onchain Inference

FAB's FPGA-based accelerator for bootstrappable FHE schemes delivers outsized gains over CPU and GPU alternatives. By optimizing polynomial multiplications - the core of homomorphic operations - it slashes latency for small model inference. In a private compute scenario, this means AI agents analyzing encrypted trades or patient records in milliseconds, not minutes.
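To make "polynomial multiplication" concrete: lattice-based FHE schemes commonly work in the ring Z_q[x]/(x^n + 1), where multiplying two polynomials wraps around with a sign flip because x^n = -1. The sketch below is a schoolbook O(n^2) version for illustration, not FAB's design; accelerators and production libraries typically use the number-theoretic transform instead. The function name is an assumption, and the modulus must stay small enough that products fit in a JavaScript number.

```typescript
// Multiply two polynomials in Z_q[x] / (x^n + 1).
// Coefficients are in ascending order and assumed to lie in [0, q).
// Illustrative only: q must be small enough that q * q < 2^53.
function negacyclicMul(a: number[], b: number[], q: number): number[] {
  const n = a.length;
  if (b.length !== n) throw new Error("operands must have the same length");
  const out = new Array<number>(n).fill(0);
  for (let i = 0; i < n; i++) {
    for (let j = 0; j < n; j++) {
      const prod = (a[i] * b[j]) % q;
      const k = i + j;
      if (k < n) {
        out[k] = (out[k] + prod) % q;
      } else {
        // x^n = -1, so terms of degree >= n wrap around with a minus sign.
        out[k - n] = (((out[k - n] - prod) % q) + q) % q;
      }
    }
  }
  return out;
}
```

For example, with n = 4 and q = 17, multiplying x by x^3 yields x^4 = -1, which the function returns as `[16, 0, 0, 0]`.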

Imagine deploying a skills-augmented model for fraud detection on Ethereum, where transaction ciphertexts arrive encrypted and inferences return without a single bit exposed. FAB's design targets exactly these high-stakes, low-latency needs, positioning FHE as a viable backbone for private onchain AI inference. While software optimizations matter, hardware like this feels like the missing link - one that could propel small models from lab curiosities to production staples.

Open-Source Momentum: Zama and Beyond

Zama leads the charge with its FHEVM toolkit, a TypeScript powerhouse for Ethereum integration. Developers encrypt data client-side, ship it to smart contracts housing model logic, and retrieve encrypted predictions. This workflow sidesteps oracle vulnerabilities and validator snooping, core risks in today's DeFi and AI agents. Their developer program sweetens the deal: priority tooling access, certification paths, and partnerships that fast-track FHE integration on Ethereum. I've seen institutional teams pivot to these libraries, drawn by the blend of simplicity and cryptographic rigor.
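The encrypt, compute, decrypt round trip can be sketched without any FHE library. The toy below swaps in textbook Paillier, which is only additively homomorphic (not fully homomorphic) and is not Zama's API, to show a "contract" computing a linear score over ciphertexts it cannot read. Every name here is hypothetical, the primes are tiny, and `Math.random` is not a secure randomness source, so treat it strictly as a teaching aid.

```typescript
// Toy Paillier: additively homomorphic only, NOT FHE and NOT Zama's FHEVM API.
// Tiny primes keep every product below 2^53; never use such parameters in practice.
const p = 61, q = 53;
const n = p * q;   // public modulus
const n2 = n * n;  // ciphertext modulus

function gcd(a: number, b: number): number {
  return b === 0 ? a : gcd(b, a % b);
}
const lambda = ((p - 1) * (q - 1)) / gcd(p - 1, q - 1); // lcm(p-1, q-1), private

function modPow(base: number, exp: number, mod: number): number {
  let result = 1, b = base % mod, e = exp;
  while (e > 0) {
    if (e % 2 === 1) result = (result * b) % mod;
    b = (b * b) % mod;
    e = Math.floor(e / 2);
  }
  return result;
}

function modInv(a: number, mod: number): number {
  // Extended Euclid, tracking only the coefficient of `a`.
  let [r0, r1] = [mod, a % mod];
  let [t0, t1] = [0, 1];
  while (r1 !== 0) {
    const quot = Math.floor(r0 / r1);
    [r0, r1] = [r1, r0 - quot * r1];
    [t0, t1] = [t1, t0 - quot * t1];
  }
  if (r0 !== 1) throw new Error("value is not invertible");
  return ((t0 % mod) + mod) % mod;
}
const mu = modInv(lambda, n); // private

// Client side: Enc(m) = (1 + m*n) * r^n mod n^2, with random r coprime to n.
function encrypt(m: number): number {
  let r = 0;
  do { r = 1 + Math.floor(Math.random() * (n - 1)); } while (gcd(r, n) !== 1);
  return (((1 + m * n) % n2) * modPow(r, n, n2)) % n2;
}

// Client side: Dec(c) = L(c^lambda mod n^2) * mu mod n, where L(x) = (x - 1) / n.
function decrypt(c: number): number {
  const l = (modPow(c, lambda, n2) - 1) / n;
  return (l * mu) % n;
}

// "Contract" side: sees ciphertexts plus public non-negative integer weights.
// Multiplying ciphertexts adds plaintexts; raising to w multiplies a plaintext by w.
function encryptedScore(encX: number[], weights: number[], bias: number): number {
  let acc = (1 + bias * n) % n2; // trivial encryption of the public bias
  encX.forEach((c, i) => { acc = (acc * modPow(c, weights[i], n2)) % n2; });
  return acc;
}
```

A client would call `encrypt` on each feature, hand the ciphertexts to `encryptedScore`, and run `decrypt` on the result; the score must stay below `n` to decrypt correctly. A real FHE stack additionally supports multiplication between ciphertexts, which is what non-linear model layers require.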

Hanzo AI's 700-plus open-source repos add fuel, spanning AI infra to blockchain tools under permissive licenses. Pair these with SkillsBench insights, and you get SLMs that punch above their parameter count - ideal for onchain slots where gas fees punish bloat. Yet, here's a measured take: not every skill augmentation scales homomorphically. Curated datasets for finance or healthcare must prioritize noise tolerance, lest FHE's approximations degrade model fidelity.
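One way to see how approximation can erode fidelity: homomorphic circuits evaluate additions and multiplications, so non-polynomial activations are typically replaced by polynomials. The sketch below compares the sigmoid with its degree-3 Taylor expansion around zero. That particular polynomial is an illustrative choice, not one taken from any toolkit mentioned here, and the helper names are assumptions.

```typescript
// Exact sigmoid, for reference.
const sigmoid = (x: number): number => 1 / (1 + Math.exp(-x));

// Degree-3 Taylor expansion of the sigmoid around 0: 1/2 + x/4 - x^3/48.
// Uses only additions and multiplications, the operations FHE circuits provide.
const sigmoidPoly3 = (x: number): number => 0.5 + x / 4 - (x * x * x) / 48;

// Largest absolute gap between the two on an evenly spaced grid over [lo, hi].
function maxAbsError(lo: number, hi: number, steps: number = 1000): number {
  let worst = 0;
  for (let i = 0; i <= steps; i++) {
    const x = lo + ((hi - lo) * i) / steps;
    worst = Math.max(worst, Math.abs(sigmoid(x) - sigmoidPoly3(x)));
  }
  return worst;
}
```

On inputs confined to [-1, 1] the gap stays below 0.01, while on [-4, 4] it exceeds 0.5. That is why feature scaling and input ranges deserve attention before a skill is ported to an encrypted pipeline.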

Navigating Risks in the Wild

Enthusiasm should be tempered with reality. AI agents already probe Ethereum contracts for exploits, per recent reports, underscoring why privacy layers like FHE matter. Small models, lean as they are, still demand vigilant auditing - especially when skills involve adversarial training on encrypted inputs. ETHGlobal prizes spotlight this tension, challenging builders to craft SDKs for Arbitrum AI agents. Winners could define the next wave of encrypted AI dApps in 2026, but only if toolkits bridge the gap between prototype and prime time.

From my vantage, the real edge lies in hybrid stacks: SLMs fine-tuned via FHAIM, accelerated by FAB, orchestrated through Zama's stack. This isn't hype; it's a pragmatic pivot. Blockchain Council notes SLMs' classroom practicality, but extend that to onchain oracles predicting yields on encrypted portfolios, or healthcare dApps scoring risks without HIPAA breaches. Developers gain sovereignty, users reclaim privacy, and chains evolve beyond transparent ledgers to confidential compute hubs.

FHEToolkit.com distills these threads into actionable libraries and tutorials, optimized for Ethereum L2s. Whether bootstrapping your first homomorphic inference or scaling skills-augmented ensembles, the toolkit equips you for what's next. As macroeconomic pressures squeeze centralized AI costs, decentralized alternatives sharpen. Patience here pays: deploy small, encrypt thoroughly, and watch privacy compound.
